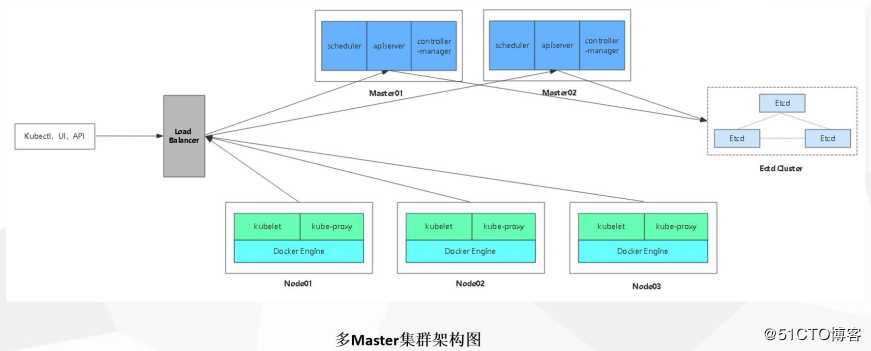

Kubernetes platform environment plan

Load balancers

Nginx1:192.168.13.128/24

Nginx2:192.168.13.129/24

Master nodes

master1:192.168.13.131/24 kube-apiserver kube-controller-manager kube-scheduler etcd

master2:192.168.13.130/24 kube-apiserver kube-controller-manager kube-scheduler etcd

Worker nodes

node1:192.168.13.132/24 kubelet kube-proxy docker flannel etcd

node2:192.168.13.133/24 kubelet kube-proxy docker flannel etcd
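
The etcd commands later in this walkthrough use three members (etcd01 on 192.168.13.131, etcd02 on 192.168.13.132, etcd03 on 192.168.13.133) and keep repeating two strings built from them: the peer list on port 2380 and the client endpoint list on port 2379. A minimal sketch of deriving both from one list follows. The helper name `etcd_urls` and the `MEMBERS` array are assumptions for illustration and are not part of the original scripts.

```shell
#!/bin/bash
# Hypothetical helper (not in the original scripts): build a comma-separated
# list of https URLs from "name=ip" pairs.
#   $1 = port, $2 = "yes" to prefix each URL with "name=", remaining args = pairs
etcd_urls() {
  local port=$1 with_name=$2
  shift 2
  local out="" pair name ip
  for pair in "$@"; do
    name=${pair%%=*}   # text before the first "="
    ip=${pair#*=}      # text after the first "="
    if [ "$with_name" = yes ]; then
      out+="${out:+,}${name}=https://${ip}:${port}"
    else
      out+="${out:+,}https://${ip}:${port}"
    fi
  done
  printf '%s\n' "$out"
}

# Member names and addresses as used by the etcd commands in this walkthrough.
MEMBERS=(etcd01=192.168.13.131 etcd02=192.168.13.132 etcd03=192.168.13.133)

INITIAL_CLUSTER=$(etcd_urls 2380 yes "${MEMBERS[@]}")   # value for ETCD_INITIAL_CLUSTER
ENDPOINTS=$(etcd_urls 2379 no "${MEMBERS[@]}")          # value for etcdctl --endpoints

echo "$INITIAL_CLUSTER"
echo "$ENDPOINTS"
```

Keeping the member list in one place means the peer list and the endpoint list cannot drift apart when an address changes.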

Deployment steps

1: Self-sign the etcd certificates

2: Deploy etcd

3: Install docker on the nodes

4: Deploy flannel (write the subnet into etcd first)

----------- master -----------

5: Self-sign the APIServer certificates

6: Deploy the APIServer component (token.csv)

7: Deploy the controller-manager (pointing it at the apiserver certificates) and the scheduler

----------- node -----------

8: Generate the kubeconfig files (bootstrap.kubeconfig and kube-proxy.kubeconfig)

9: Deploy the kubelet component

10: Deploy the kube-proxy component

----------- join the cluster -----------

11: kubectl get csr && kubectl certificate approve: approve the certificate request so that the node joins the cluster

12: Add another node

13: Check the nodes with kubectl get node

Prepare the etcd scripts on master01

    [root@master01 ~]# mkdir k8s
    [root@master01 ~]# cd k8s/
    [root@master01 k8s]# rz -E    ## upload the etcd scripts
    [root@master01 k8s]# ls
    etcd-cert.sh  etcd.sh

Contents of etcd-cert.sh, the certificate-creation script. The hosts list in the script as uploaded differs from this lab; the commands run by hand further down use this lab's addresses.

    cat > ca-config.json <<EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "www": {
            "expiry": "87600h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

    cat > ca-csr.json <<EOF
    {
      "CN": "etcd CA",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing"
        }
      ]
    }
    EOF

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

    #-----------------------

    cat > server-csr.json <<EOF
    {
      "CN": "etcd",
      "hosts": [
        "10.206.240.188",
        "10.206.240.189",
        "10.206.240.111"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

Contents of etcd.sh, the etcd service script

    #!/bin/bash
    # example: ./etcd.sh etcd01 192.168.1.10 etcd02=https://192.168.1.11:2380,etcd03=https://192.168.1.12:2380

    ETCD_NAME=$1
    ETCD_IP=$2
    ETCD_CLUSTER=$3

    WORK_DIR=/opt/etcd

    cat <<EOF >$WORK_DIR/cfg/etcd
    #[Member]
    ETCD_NAME="${ETCD_NAME}"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"
    ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"
    ETCD_INITIAL_CLUSTER="etcd01=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"
    EOF

    cat <<EOF >/usr/lib/systemd/system/etcd.service
    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target

    [Service]
    Type=notify
    EnvironmentFile=${WORK_DIR}/cfg/etcd
    ExecStart=${WORK_DIR}/bin/etcd \
    --name=\${ETCD_NAME} \
    --data-dir=\${ETCD_DATA_DIR} \
    --listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \
    --listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \
    --advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \
    --initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
    --initial-cluster=\${ETCD_INITIAL_CLUSTER} \
    --initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \
    --initial-cluster-state=new \
    --cert-file=${WORK_DIR}/ssl/server.pem \
    --key-file=${WORK_DIR}/ssl/server-key.pem \
    --peer-cert-file=${WORK_DIR}/ssl/server.pem \
    --peer-key-file=${WORK_DIR}/ssl/server-key.pem \
    --trusted-ca-file=${WORK_DIR}/ssl/ca.pem \
    --peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable etcd
    systemctl restart etcd

Install the cfssl tools

    [root@master01 k8s]# mkdir etcd-cert    ## create the certificate directory
    [root@master01 k8s]# mv etcd-cert.sh etcd-cert    ## move the script into it
    [root@master01 k8s]# vim cfssl.sh    ## tool download script
    curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl
    curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson
    curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo
    chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

    [root@master01 k8s]# bash cfssl.sh    ## download the official cfssl binaries
    [root@master01 k8s]# ls /usr/local/bin/
    cfssl  cfssl-certinfo  cfssljson

cfssl generates certificates, cfssljson turns the JSON output of cfssl into certificate files, and cfssl-certinfo displays certificate information.

Generate the etcd certificates by hand

    [root@master01 k8s]# cd /root/k8s/etcd-cert/    ## switch to the certificate script directory
    [root@master01 etcd-cert]# ls
    etcd-cert.sh

Define the CA configuration:

    [root@master01 etcd-cert]# cat > ca-config.json <<EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "www": {
            "expiry": "87600h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

Define the CA signing request:

    [root@master01 etcd-cert]# cat > ca-csr.json <<EOF
    {
      "CN": "etcd CA",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing"
        }
      ]
    }
    EOF
    [root@master01 etcd-cert]# ls
    ca-config.json  ca-csr.json  etcd-cert.sh

Generate the CA certificate; this produces ca-key.pem and ca.pem:

    [root@master01 etcd-cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -
    2020/02/09 18:09:13 [INFO] generating a new CA key and certificate from CSR
    2020/02/09 18:09:13 [INFO] generate received request
    2020/02/09 18:09:13 [INFO] received CSR
    2020/02/09 18:09:13 [INFO] generating key: rsa-2048
    2020/02/09 18:09:13 [INFO] encoded CSR
    2020/02/09 18:09:13 [INFO] signed certificate with serial number 443437184464842782624738198723332409563005728279
    [root@master01 etcd-cert]# ls
    ca-config.json  ca.csr  ca-csr.json  ca-key.pem  ca.pem  etcd-cert.sh

Specify the three etcd members for peer verification. The hosts list holds the addresses of the three machines:

    [root@master01 etcd-cert]# cat > server-csr.json <<EOF
    {
      "CN": "etcd",
      "hosts": [
        "192.168.13.131",
        "192.168.13.132",
        "192.168.13.133"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing"
        }
      ]
    }
    EOF

Generate the etcd server certificate; this produces server-key.pem and server.pem:

    [root@master01 etcd-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

Deploy etcd on master01

    [root@master01 etcd-cert]# cd /root/k8s/
    [root@master01 k8s]# rz -E    ## upload the release tarballs into the k8s directory
    [root@master01 k8s]# ls
    etcd-cert                        flannel-v0.10.0-linux-amd64.tar.gz
    etcd.sh                          kubernetes-server-linux-amd64.tar.gz
    etcd-v3.3.10-linux-amd64.tar.gz
    [root@master01 k8s]# tar zxvf etcd-v3.3.10-linux-amd64.tar.gz    ## extract
    [root@master01 k8s]# cd etcd-v3.3.10-linux-amd64/
    [root@master01 etcd-v3.3.10-linux-amd64]# ls
    Documentation  etcd  etcdctl  README-etcdctl.md  README.md  READMEv2-etcdctl.md
    [root@master01 etcd-v3.3.10-linux-amd64]# mkdir /opt/etcd/{cfg,bin,ssl} -p    ## config, binary and certificate directories
    [root@master01 etcd-v3.3.10-linux-amd64]# mv etcd etcdctl /opt/etcd/bin/    ## install the binaries
    [root@master01 etcd-v3.3.10-linux-amd64]# cd ../etcd-cert/
    [root@master01 etcd-cert]# cp *.pem /opt/etcd/ssl/    ## copy the certificates
    [root@master01 etcd-cert]# ls /opt/etcd/ssl/
    ca-key.pem  ca.pem  server-key.pem  server.pem
    [root@master01 etcd-cert]# cd ../
    [root@master01 k8s]# bash etcd.sh etcd01 192.168.13.131 etcd02=https://192.168.13.132:2380,etcd03=https://192.168.13.133:2380

The script blocks at this point while etcd waits for the other members to join. From a second session the etcd process is already visible:

    [root@master01 ~]# ps -ef | grep etcd

Stop the firewall and set SELinux to permissive on the master and on every node:

    [root@master01 ~]# systemctl stop firewalld.service
    [root@master01 ~]# setenforce 0
    [root@node01 ~]# systemctl stop firewalld.service
    [root@node01 ~]# setenforce 0

Copy the etcd directory (binaries, config and certificates) and the unit file to the other members:

    [root@master01 k8s]# scp -r /opt/etcd/ root@192.168.13.132:/opt
    [root@master01 k8s]# scp -r /opt/etcd/ root@192.168.13.133:/opt
    [root@master01 k8s]# scp /usr/lib/systemd/system/etcd.service root@192.168.13.132:/usr/lib/systemd/system/
    [root@master01 k8s]# scp /usr/lib/systemd/system/etcd.service root@192.168.13.133:/usr/lib/systemd/system/

Edit the etcd configuration on node01. Change ETCD_NAME and the address in the four listen and advertise URLs:

    [root@node01 ~]# vim /opt/etcd/cfg/etcd
    #[Member]
    ETCD_NAME="etcd02"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://192.168.13.132:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.13.132:2379"

    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.13.132:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://192.168.13.132:2379"
    ETCD_INITIAL_CLUSTER="etcd01=https://192.168.13.131:2380,etcd02=https://192.168.13.132:2380,etcd03=https://192.168.13.133:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

Edit the etcd configuration on node02 in the same way:

    [root@node02 ~]# vim /opt/etcd/cfg/etcd
    #[Member]
    ETCD_NAME="etcd03"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://192.168.13.133:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.13.133:2379"

    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.13.133:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://192.168.13.133:2379"
    ETCD_INITIAL_CLUSTER="etcd01=https://192.168.13.131:2380,etcd02=https://192.168.13.132:2380,etcd03=https://192.168.13.133:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

Run the script on master01 again so that it waits for the members, then start etcd on the nodes:

    [root@master01 k8s]# bash etcd.sh etcd01 192.168.13.131 etcd02=https://192.168.13.132:2380,etcd03=https://192.168.13.133:2380
    [root@node01 ~]# systemctl start etcd.service
    [root@node02 ~]# systemctl start etcd.service

Check the cluster health from master01:

    [root@master01 k8s]# cd etcd-cert/    ## switch to the certificate directory
    [root@master01 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.13.131:2379,https://192.168.13.132:2379,https://192.168.13.133:2379" cluster-health
    member 76e0a15c7cd72ef7 is healthy: got healthy result from https://192.168.13.133:2379
    member cbcfa6e700d4aa11 is healthy: got healthy result from https://192.168.13.132:2379
    member e4f560fae6a18df3 is healthy: got healthy result from https://192.168.13.131:2379
    cluster is healthy

Install docker on the nodes

    [root@node01 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2    ## install dependencies
    [root@node01 ~]# yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo    ## use the Aliyun mirror
    [root@node01 ~]# yum install -y docker-ce    ## install docker
    [root@node01 ~]# systemctl start docker.service
    [root@node01 ~]# systemctl enable docker.service
    [root@node01 ~]# tee /etc/docker/daemon.json <<-'EOF'    ## registry mirror for faster pulls
    {
      "registry-mirrors": ["https://3a9s8zx5.mirror.aliyuncs.com"]
    }
    EOF
    [root@node01 ~]# systemctl daemon-reload
    [root@node01 ~]# systemctl restart docker
    [root@node01 ~]# vim /etc/sysctl.conf
    net.ipv4.ip_forward=1    ## enable IP forwarding
    [root@node01 ~]# sysctl -p    ## reload
    [root@node01 ~]# service network restart
    [root@node01 ~]# systemctl restart docker

Deploy flannel

Write the subnet allocation into etcd for flannel to use (network 172.17.0.0/16), then read it back:

    [root@master01 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.13.131:2379,https://192.168.13.132:2379,https://192.168.13.133:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'
    [root@master01 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.13.131:2379,https://192.168.13.132:2379,https://192.168.13.133:2379" get /coreos.com/network/config
    [root@master01 etcd-cert]# cd ../

Copy the flannel tarball to every node (flannel only needs to run on the nodes):

    [root@master01 k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.13.132:/root
    [root@master01 k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.13.133:/root

Install flannel on every node:

    [root@node01 ~]# tar zxvf flannel-v0.10.0-linux-amd64.tar.gz    ## extract
    flanneld
    mk-docker-opts.sh
    README.md
    [root@node01 ~]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p
    [root@node01 ~]# mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/
    [root@node01 ~]# rz -E    ## upload the flannel script

Contents of flannel.sh, which writes the flannel configuration and starts the service:

    #!/bin/bash

    ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"}

    cat <<EOF >/opt/kubernetes/cfg/flanneld
    FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \
    -etcd-cafile=/opt/etcd/ssl/ca.pem \
    -etcd-certfile=/opt/etcd/ssl/server.pem \
    -etcd-keyfile=/opt/etcd/ssl/server-key.pem"
    EOF

    cat <<EOF >/usr/lib/systemd/system/flanneld.service
    [Unit]
    Description=Flanneld overlay address etcd agent
    After=network-online.target network.target
    Before=docker.service

    [Service]
    Type=notify
    EnvironmentFile=/opt/kubernetes/cfg/flanneld
    ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS
    ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable flanneld
    systemctl restart flanneld

Start flannel:

    [root@node01 ~]# bash flannel.sh https://192.168.13.131:2379,https://192.168.13.132:2379,https://192.168.13.133:2379

Edit the docker unit file so that docker picks up the flannel subnet. Add the EnvironmentFile line and reference $DOCKER_NETWORK_OPTIONS in ExecStart:

    [root@node01 ~]# vim /usr/lib/systemd/system/docker.service
    # for containers run by docker
    EnvironmentFile=/run/flannel/subnet.env
    ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H fd:// --containerd=/run/containerd/containerd.sock

    [root@node01 ~]# cat /run/flannel/subnet.env    ## show the allocated subnet
    DOCKER_OPT_BIP="--bip=172.17.45.1/24"
    DOCKER_OPT_IPMASQ="--ip-masq=false"
    DOCKER_OPT_MTU="--mtu=1450"
    DOCKER_NETWORK_OPTIONS=" --bip=172.17.45.1/24 --ip-masq=false --mtu=1450"
    [root@node01 ~]# systemctl daemon-reload
    [root@node01 ~]# systemctl restart docker
    [root@node01 ~]# ifconfig    ## docker0 is now 172.17.45.1; the flannel interface is the virtual overlay network
    [root@node01 ~]# docker run -it centos:7 /bin/bash    ## pull centos:7 and enter the container
    [root@720f2727f307 /]# yum install net-tools -y    ## install the network tools
    [root@720f2727f307 /]# ifconfig    ## the container address is 172.17.45.2

Configure node02 exactly like node01. Its docker0 address is 172.17.1.1:

    [root@node02 ~]# docker run -it centos:7 /bin/bash    ## start a container and enter it
    [root@c2cfc9af3b9f /]# yum install -y net-tools
    [root@c2cfc9af3b9f /]# ifconfig    ## the container address is 172.17.1.2
    [root@c2cfc9af3b9f /]# ping 172.17.45.2    ## test connectivity across the flannel network

Self-sign the APIServer certificates on master01

    [root@master01 k8s]# rz -E    ## upload the master script archive
    [root@master01 k8s]# ls
    master.zip
    [root@master01 k8s]# unzip master.zip
    Archive:  master.zip
      inflating: apiserver.sh
      inflating: controller-manager.sh
      inflating: scheduler.sh
    [root@master01 k8s]# chmod +x controller-manager.sh    ## make it executable
    [root@master01 k8s]# mkdir k8s-cert    ## directory for the self-signed apiserver certificates
    [root@master01 k8s]# cd k8s-cert/
    [root@master01 k8s-cert]# rz -E    ## upload the k8s certificate script
    [root@master01 k8s-cert]# ls
    k8s-cert.sh

Contents of k8s-cert.sh, the API certificate script:

    cat > ca-config.json <<EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "expiry": "87600h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

    cat > ca-csr.json <<EOF
    {
      "CN": "kubernetes",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

    #-----------------------

    # hosts below: 192.168.13.131 = master1, 192.168.13.130 = master2,
    # 192.168.13.100 = VIP (public access entry point),
    # 192.168.13.128 = load balancer (master), 192.168.13.129 = load balancer (backup)
    cat > server-csr.json <<EOF
    {
      "CN": "kubernetes",
      "hosts": [
        "10.0.0.1",
        "127.0.0.1",
        "192.168.13.131",
        "192.168.13.130",
        "192.168.13.100",
        "192.168.13.128",
        "192.168.13.129",
        "kubernetes",
        "kubernetes.default",
        "kubernetes.default.svc",
        "kubernetes.default.svc.cluster",
        "kubernetes.default.svc.cluster.local"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

    #-----------------------

    cat > admin-csr.json <<EOF
    {
      "CN": "admin",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "system:masters",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

    #-----------------------

    cat > kube-proxy-csr.json <<EOF
    {
      "CN": "system:kube-proxy",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

Run the script; it generates eight .pem files:

    [root@master01 k8s-cert]# bash k8s-cert.sh
    [root@master01 k8s-cert]# ls *pem
    admin-key.pem  ca-key.pem  kube-proxy-key.pem  server-key.pem
    admin.pem      ca.pem      kube-proxy.pem      server.pem
    [root@master01 k8s-cert]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p    ## create the working directories
    [root@master01 k8s-cert]# cp ca*pem server*pem /opt/kubernetes/ssl/    ## copy the certificates into place

Deploy the APIServer

    [root@master01 k8s-cert]# cd ..
    [root@master01 k8s]# tar zxvf kubernetes-server-linux-amd64.tar.gz    ## extract
    [root@master01 k8s]# cd kubernetes/server/bin/    ## look at the binaries
    [root@master01 bin]# ls
    apiextensions-apiserver              kube-controller-manager.tar
    cloud-controller-manager             kubectl
    cloud-controller-manager.docker_tag  kubelet
    cloud-controller-manager.tar         kube-proxy
    hyperkube                            kube-proxy.docker_tag
    kubeadm                              kube-proxy.tar
    kube-apiserver                       kube-scheduler
    kube-apiserver.docker_tag            kube-scheduler.docker_tag
    kube-apiserver.tar                   kube-scheduler.tar
    kube-controller-manager              mounter
    kube-controller-manager.docker_tag
    [root@master01 bin]# cp kube-apiserver kubectl kube-scheduler kube-controller-manager /opt/kubernetes/bin/    ## copy the master components into place
    [root@master01 bin]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '    ## generate a random token
    b555625c736044a609cf020902e773fa
    [root@master01 bin]# vim /opt/kubernetes/cfg/token.csv    ## fields: token, user name, uid, group
    b555625c736044a609cf020902e773fa,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

Contents of /root/k8s/apiserver.sh:

    #!/bin/bash

    MASTER_ADDRESS=$1
    ETCD_SERVERS=$2

    cat <<EOF >/opt/kubernetes/cfg/kube-apiserver

    KUBE_APISERVER_OPTS="--logtostderr=true \
    --v=4 \
    --etcd-servers=${ETCD_SERVERS} \
    --bind-address=${MASTER_ADDRESS} \
    --secure-port=6443 \
    --advertise-address=${MASTER_ADDRESS} \
    --allow-privileged=true \
    --service-cluster-ip-range=10.0.0.0/24 \
    --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \
    --authorization-mode=RBAC,Node \
    --kubelet-https=true \
    --enable-bootstrap-token-auth \
    --token-auth-file=/opt/kubernetes/cfg/token.csv \
    --service-node-port-range=30000-50000 \
    --tls-cert-file=/opt/kubernetes/ssl/server.pem \
    --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \
    --client-ca-file=/opt/kubernetes/ssl/ca.pem \
    --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \
    --etcd-cafile=/opt/etcd/ssl/ca.pem \
    --etcd-certfile=/opt/etcd/ssl/server.pem \
    --etcd-keyfile=/opt/etcd/ssl/server-key.pem"

    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service
    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver
    ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kube-apiserver
    systemctl restart kube-apiserver

Start the apiserver. The first argument is the local address, the second is the etcd cluster address list:

    [root@master01 bin]# cd /root/k8s/
    [root@master01 k8s]# bash apiserver.sh 192.168.13.131 https://192.168.13.131:2379,https://192.168.13.132:2379,https://192.168.13.133:2379
    [root@master01 k8s]# ps aux | grep kube    ## check that the process started
    [root@master01 k8s]# cat /opt/kubernetes/cfg/kube-apiserver    ## view the generated configuration
    [root@master01 k8s]# netstat -ntap | grep 6443    ## check the https port
    tcp        0      0 192.168.13.131:6443     0.0.0.0:*               LISTEN      38191/kube-apiserve
    tcp        0      0 192.168.13.131:6443     192.168.13.131:34900    ESTABLISHED 38191/kube-apiserve
    tcp        0      0 192.168.13.131:34900    192.168.13.131:6443     ESTABLISHED 38191/kube-apiserve
    [root@master01 k8s]# netstat -ntap | grep 8080    ## check port 8080
    tcp        0      0 127.0.0.1:8080          0.0.0.0:*               LISTEN      38191/kube-apiserve

Deploy the scheduler

Contents of scheduler.sh:

    #!/bin/bash

    MASTER_ADDRESS=$1

    cat <<EOF >/opt/kubernetes/cfg/kube-scheduler

    KUBE_SCHEDULER_OPTS="--logtostderr=true \
    --v=4 \
    --master=${MASTER_ADDRESS}:8080 \
    --leader-elect"

    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-scheduler.service
    [Unit]
    Description=Kubernetes Scheduler
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler
    ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kube-scheduler
    systemctl restart kube-scheduler

    [root@master01 k8s]# ./scheduler.sh 127.0.0.1    ## start the scheduler service

Deploy the controller-manager

Contents of controller-manager.sh:

    #!/bin/bash

    MASTER_ADDRESS=$1

    cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager

    KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \
    --v=4 \
    --master=${MASTER_ADDRESS}:8080 \
    --leader-elect=true \
    --address=127.0.0.1 \
    --service-cluster-ip-range=10.0.0.0/24 \
    --cluster-name=kubernetes \
    --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \
    --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \
    --root-ca-file=/opt/kubernetes/ssl/ca.pem \
    --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \
    --experimental-cluster-signing-duration=87600h0m0s"

    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service
    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager
    ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kube-controller-manager
    systemctl restart kube-controller-manager

    [root@master01 k8s]# ./controller-manager.sh 127.0.0.1    ## start the controller-manager
    [root@master01 k8s]# /opt/kubernetes/bin/kubectl get cs    ## check the master component status
    NAME                 STATUS    MESSAGE             ERROR
    scheduler            Healthy   ok
    controller-manager   Healthy   ok
    etcd-2               Healthy   {"health":"true"}
    etcd-0               Healthy   {"health":"true"}
    etcd-1               Healthy   {"health":"true"}

Prepare the nodes

On master01, copy kubelet and kube-proxy to the nodes:

    [root@master01 k8s]# cd kubernetes/server/bin/
    [root@master01 bin]# scp kubelet kube-proxy root@192.168.13.132:/opt/kubernetes/bin/
    [root@master01 bin]# scp kubelet kube-proxy root@192.168.13.133:/opt/kubernetes/bin/

On the node:

    [root@node01 ~]# rz -E    ## upload the node script archive
    [root@node01 ~]# unzip node.zip
    Archive:  node.zip
      inflating: proxy.sh
      inflating: kubelet.sh

Generate the kubeconfig files on master01

    [root@master01 bin]# cd /root/k8s/
    [root@master01 k8s]# mkdir kubeconfig    ## create the directory
    [root@master01 k8s]# cd kubeconfig/
    [root@master01 kubeconfig]# rz -E    ## upload the kubeconfig script
    [root@master01 kubeconfig]# ls
    kubeconfig.sh
    [root@master01 kubeconfig]# cat /opt/kubernetes/cfg/token.csv    ## copy the token for the script below
    b555625c736044a609cf020902e773fa,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

Contents of kubeconfig.sh. Delete the token-generation part of the uploaded script and put the token from token.csv into the --token option:

    APISERVER=$1
    SSL_DIR=$2

    # Create the kubelet bootstrapping kubeconfig
    export KUBE_APISERVER="https://$APISERVER:6443"

    # Set the cluster parameters
    kubectl config set-cluster kubernetes \
      --certificate-authority=$SSL_DIR/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=bootstrap.kubeconfig

    # Set the client authentication parameters (replace the token with your own)
    kubectl config set-credentials kubelet-bootstrap \
      --token=b555625c736044a609cf020902e773fa \
      --kubeconfig=bootstrap.kubeconfig

    # Set the context parameters
    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kubelet-bootstrap \
      --kubeconfig=bootstrap.kubeconfig

    # Set the default context
    kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

    #----------------------

    # Create the kube-proxy kubeconfig file
    kubectl config set-cluster kubernetes \
      --certificate-authority=$SSL_DIR/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config set-credentials kube-proxy \
      --client-certificate=$SSL_DIR/kube-proxy.pem \
      --client-key=$SSL_DIR/kube-proxy-key.pem \
      --embed-certs=true \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kube-proxy \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Add kubectl to the PATH and generate the files. The script is invoked as kubeconfig here, and the listing afterwards shows it under that name, so it had been renamed from kubeconfig.sh:

    [root@master01 kubeconfig]# vim /etc/profile    ## extend the PATH
    export PATH=$PATH:/opt/kubernetes/bin/
    [root@master01 kubeconfig]# source /etc/profile    ## reload the profile
    [root@master01 kubeconfig]# bash kubeconfig 192.168.13.131 /root/k8s/k8s-cert/    ## generate the kubeconfig files
    [root@master01 kubeconfig]# ls
    bootstrap.kubeconfig  kubeconfig  kube-proxy.kubeconfig

Copy the kubeconfig files to the nodes:

    [root@master01 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.13.132:/opt/kubernetes/cfg/
    [root@master01 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.13.133:/opt/kubernetes/cfg/

Bind the kubelet-bootstrap user to the system:node-bootstrapper role so that it may connect to the apiserver and request a signed certificate. This step is essential:

    [root@master01 kubeconfig]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

Deploy kubelet on node01

Contents of kubelet.sh:

    #!/bin/bash

    NODE_ADDRESS=$1
    DNS_SERVER_IP=${2:-"10.0.0.2"}

    cat <<EOF >/opt/kubernetes/cfg/kubelet

    KUBELET_OPTS="--logtostderr=true \
    --v=4 \
    --hostname-override=${NODE_ADDRESS} \
    --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \
    --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \
    --config=/opt/kubernetes/cfg/kubelet.config \
    --cert-dir=/opt/kubernetes/ssl \
    --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

    EOF

    cat <<EOF >/opt/kubernetes/cfg/kubelet.config

    kind: KubeletConfiguration
    apiVersion: kubelet.config.k8s.io/v1beta1
    address: ${NODE_ADDRESS}
    port: 10250
    readOnlyPort: 10255
    cgroupDriver: cgroupfs
    clusterDNS:
    - ${DNS_SERVER_IP}
    clusterDomain: cluster.local.
    failSwapOn: false
    authentication:
      anonymous:
        enabled: true
    EOF

    cat <<EOF >/usr/lib/systemd/system/kubelet.service
    [Unit]
    Description=Kubernetes Kubelet
    After=docker.service
    Requires=docker.service

    [Service]
    EnvironmentFile=/opt/kubernetes/cfg/kubelet
    ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS
    Restart=on-failure
    KillMode=process

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kubelet
    systemctl restart kubelet

    [root@node01 ~]# bash kubelet.sh 192.168.13.132    ## run the script
    [root@node01 ~]# ps aux | grep kube    ## check that the service started

Approve the node on master01

The request stays Pending until the cluster issues a certificate to the node:

    [root@master01 kubeconfig]# kubectl get csr    ## check the certificate requests
    NAME                                                   AGE   REQUESTOR           CONDITION
    node-csr-PvqJh9Nza5SyPUakuwOkiMUsh7zo3ZG9vw3OTNtlkgg   73s   kubelet-bootstrap   Pending
    [root@master01 kubeconfig]# kubectl certificate approve node-csr-PvqJh9Nza5SyPUakuwOkiMUsh7zo3ZG9vw3OTNtlkgg
    [root@master01 kubeconfig]# kubectl get csr    ## the request is now approved
    NAME                                                   AGE     REQUESTOR           CONDITION
    node-csr-PvqJh9Nza5SyPUakuwOkiMUsh7zo3ZG9vw3OTNtlkgg   3m34s   kubelet-bootstrap   Approved,Issued
    [root@master01 kubeconfig]# kubectl get node    ## node01 has joined the cluster
    NAME             STATUS   ROLES    AGE    VERSION
    192.168.13.132   Ready    <none>   115s   v1.12.3

Deploy kube-proxy on node01

Contents of proxy.sh:

    #!/bin/bash

    NODE_ADDRESS=$1

    cat <<EOF >/opt/kubernetes/cfg/kube-proxy

    KUBE_PROXY_OPTS="--logtostderr=true \
    --v=4 \
    --hostname-override=${NODE_ADDRESS} \
    --cluster-cidr=10.0.0.0/24 \
    --proxy-mode=ipvs \
    --kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

    EOF

    cat <<EOF >/usr/lib/systemd/system/kube-proxy.service
    [Unit]
    Description=Kubernetes Proxy
    After=network.target

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy
    ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl daemon-reload
    systemctl enable kube-proxy
    systemctl restart kube-proxy

    [root@node01 ~]# bash proxy.sh 192.168.13.132    ## start the proxy service
    [root@node01 ~]# systemctl status kube-proxy.service    ## check the service status

Add node02

On node01, copy the finished /opt/kubernetes directory and the kubelet and kube-proxy unit files to node02, where they only need small changes:

    [root@node01 ~]# scp -r /opt/kubernetes/ root@192.168.13.133:/opt/
    [root@node01 ~]# scp /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.13.133:/usr/lib/systemd/system/

On node02, first delete the certificates copied from node01, because node02 will request its own:

    [root@node02 ~]# cd /opt/kubernetes/ssl/    ## switch to the certificate directory
    [root@node02 ssl]# ls
    kubelet-client-2020-02-10-00-10-11.pem  kubelet.crt
    kubelet-client-current.pem              kubelet.key
    [root@node02 ssl]# rm -rf *

Then change the address in the three configuration files kubelet, kubelet.config and kube-proxy:

    [root@node02 ssl]# cd ../cfg/
    [root@node02 cfg]# vim kubelet    ## change the address
    --hostname-override=192.168.13.133 \
    [root@node02 cfg]# vim kubelet.config    ## change the address
    address: 192.168.13.133
    [root@node02 cfg]# vim kube-proxy    ## change the address
    --hostname-override=192.168.13.133 \
    [root@node02 cfg]# systemctl start kubelet.service    ## start kubelet
    [root@node02 cfg]# systemctl enable kubelet.service
    [root@node02 cfg]# systemctl start kube-proxy.service    ## start kube-proxy
    [root@node02 cfg]# systemctl enable kube-proxy.service

On master01, approve the new request so that node02 joins the cluster:

    [root@master01 k8s]# kubectl get csr    ## list the requests
    NAME                                                   AGE    REQUESTOR           CONDITION
    node-csr-PvqJh9Nza5SyPUakuwOkiMUsh7zo3ZG9vw3OTNtlkgg   23m    kubelet-bootstrap   Approved,Issued
    node-csr-qE6kNPzFp6dducllhsQucd-3PJQA5t7eVf-xNkx48MA   103s   kubelet-bootstrap   Pending
    [root@master01 k8s]# kubectl certificate approve node-csr-qE6kNPzFp6dducllhsQucd-3PJQA5t7eVf-xNkx48MA
    [root@master01 k8s]# kubectl get node    ## list the nodes in the cluster
    NAME             STATUS   ROLES    AGE   VERSION
    192.168.13.132   Ready    <none>   21m   v1.12.3
    192.168.13.133   Ready    <none>   70s   v1.12.3
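
Each new node goes through the same approval step: find the request whose CONDITION is Pending and pass its name to kubectl certificate approve. The sketch below filters the text output of kubectl get csr for such names. It assumes the column layout shown in this walkthrough, with CONDITION as the last column, and the function name `pending_csrs` is hypothetical. The sample text is copied from the output above so that the filter can be tried without a cluster.

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get csr` output on stdin and print the
# NAME of every request whose last column (CONDITION) is exactly "Pending".
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Sample output taken from this walkthrough.
SAMPLE='NAME                                                   AGE    REQUESTOR           CONDITION
node-csr-PvqJh9Nza5SyPUakuwOkiMUsh7zo3ZG9vw3OTNtlkgg   23m    kubelet-bootstrap   Approved,Issued
node-csr-qE6kNPzFp6dducllhsQucd-3PJQA5t7eVf-xNkx48MA   103s   kubelet-bootstrap   Pending'

PENDING=$(printf '%s\n' "$SAMPLE" | pending_csrs)
echo "$PENDING"

# On a live master the same filter could feed the approve command, one name at a time:
#   kubectl get csr | pending_csrs | xargs -r -n1 kubectl certificate approve
```

Approving everything that is pending is convenient in a lab like this one. On a shared cluster it is safer to review each request name before approving it.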